Code Repository

The code for the scripts will be available on our GitHub page in Q4 2025.

Evaluation Tools

We provide five scripts for generating evaluation results:

- Error Table: A table of RMSE translation and rotation errors.

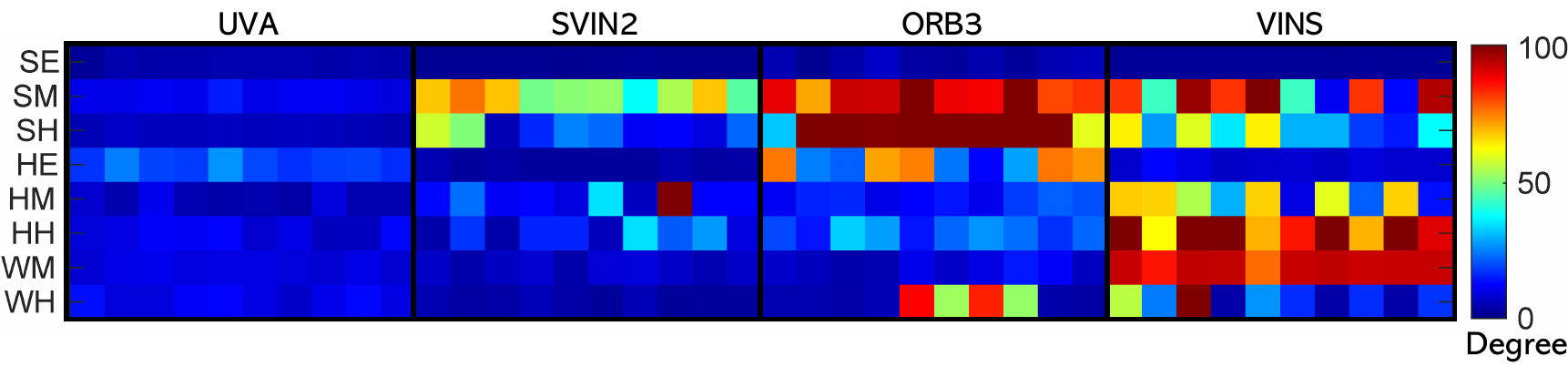

- Error Distribution Heat Map: A distribution plot of the RMSE translation and rotation errors over multiple runs, used to evaluate the robustness of a SLAM system.

- Error with Time: A figure showing how the pose errors along all six axes evolve over time.

- Stereo Reconstruction: A 3D reconstruction built from the estimated camera trajectory and stereo depth.

- Trajectory Plot: A 3D plot of the estimated trajectory against the ground-truth (GT) trajectory.
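The Error Table summarizes each run by the RMSE of its translation and rotation errors. The sketch below shows one common way to compute these values with NumPy. It assumes the estimated and ground-truth poses have already been associated by timestamp and aligned; the alignment step is not shown. The function names are hypothetical and are not taken from the released scripts. The rotation error here is the angle of the relative rotation, which may differ from the toolset's exact definition.

```python
import numpy as np


def translation_rmse(p_est, p_gt):
    """RMSE of the Euclidean position error (same units as the input, e.g. meters).

    p_est, p_gt: (N, 3) arrays of tx, ty, tz for time-associated, already-aligned
    poses (association and alignment are assumed to happen elsewhere).
    """
    diff = np.asarray(p_est, dtype=float) - np.asarray(p_gt, dtype=float)
    per_pose = np.linalg.norm(diff, axis=1)
    return float(np.sqrt(np.mean(per_pose ** 2)))


def rotation_errors_deg(q_est, q_gt):
    """Per-pose rotation error angle in degrees.

    Quaternions are (qx, qy, qz, qw) rows, the same order as the pose files.
    For unit quaternions |<q_est, q_gt>| = cos(theta / 2), where theta is the
    angle of the relative rotation; abs() handles the q / -q sign ambiguity.
    """
    q_est = np.asarray(q_est, dtype=float)
    q_gt = np.asarray(q_gt, dtype=float)
    q_est = q_est / np.linalg.norm(q_est, axis=1, keepdims=True)
    q_gt = q_gt / np.linalg.norm(q_gt, axis=1, keepdims=True)
    cos_half = np.abs(np.sum(q_est * q_gt, axis=1))
    return np.degrees(2.0 * np.arccos(np.clip(cos_half, 0.0, 1.0)))


def rotation_rmse_deg(q_est, q_gt):
    """RMSE of the per-pose rotation error angles, in degrees."""
    per_pose = rotation_errors_deg(q_est, q_gt)
    return float(np.sqrt(np.mean(per_pose ** 2)))
```

`rotation_errors_deg` also returns the error of every pose, which is the kind of per-timestamp signal an error-over-time plot is built from.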

Result Format

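To use the tools, the SLAM results should be saved in the following format. Each line holds one pose: the timestamp in seconds, the position (tx, ty, tz), and the orientation as a quaternion (qx, qy, qz, qw):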

```
# timestamp tx ty tz qx qy qz qw
1653050828.546086072922 -0.001770058525 0.000278359631 -0.000904943742 0.000068303926 0.000966903164 0.000076414040 0.999999527297
1653050828.595986366272 -0.003527211945 -0.000320959232 -0.001085915391 -0.000569093969 0.001472297661 0.000584394569 0.999998583476
1653050828.647124767303 -0.003186839487 0.000752073134 -0.000408260033 0.000436202469 0.000805340200 0.000511369736 0.999999449828
```
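Below is a minimal sketch for writing and reading files in this format with NumPy. `format_trajectory` and `load_trajectory` are hypothetical helper names and are not part of the released scripts. Both assume the column order shown above, with the quaternion stored scalar-last.

```python
import numpy as np

HEADER = "timestamp tx ty tz qx qy qz qw"


def format_trajectory(stamps, positions, quats):
    """Return the file contents: a '# ' header line, then one pose per line.

    stamps: (N,) seconds; positions: (N, 3) tx, ty, tz; quats: (N, 4) qx, qy, qz, qw.
    """
    lines = ["# " + HEADER]
    for t, p, q in zip(stamps, positions, quats):
        values = [t, *p, *q]
        lines.append(" ".join(f"{v:.12f}" for v in values))
    return "\n".join(lines) + "\n"


def load_trajectory(source):
    """Read poses from a path or an iterable of lines; '#' lines are skipped."""
    data = np.loadtxt(source, comments="#", ndmin=2)
    if data.shape[1] != 8:
        raise ValueError(f"expected 8 columns, got {data.shape[1]}")
    stamps = data[:, 0]        # seconds
    positions = data[:, 1:4]   # tx, ty, tz
    quats = data[:, 4:8]       # qx, qy, qz, qw (scalar last)
    return stamps, positions, quats
```

The text can be written with, for example, `pathlib.Path(...).write_text(...)`. Printing 12 decimals gives enough significant digits for a Unix timestamp of this size to round-trip through a float64 unchanged.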

File Structure

We follow the directory structure of the rpg_trajectory_evaluation package. To use the tools, the SLAM pose files should be organized as follows:

```
<platform>
├── <alg1>
│   ├── <platform>_<alg1>_<dataset1>
│   ├── <platform>_<alg1>_<dataset2>
│   └── ......
├── <alg2>
│   ├── <platform>_<alg2>_<dataset1>
│   ├── <platform>_<alg2>_<dataset2>
│   └── ......
└── ......
```
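The following is a minimal sketch of how a script might locate result folders in this layout. `run_dir` and `discover_runs` are hypothetical helpers. They rely only on the `<platform>_<alg>_<dataset>` folder naming shown above and make no assumption about the names of the pose files inside each folder.

```python
from pathlib import Path


def run_dir(root, platform, alg, dataset):
    """Hypothetical helper: <root>/<platform>/<alg>/<platform>_<alg>_<dataset>."""
    return Path(root) / platform / alg / f"{platform}_{alg}_{dataset}"


def discover_runs(root, platform):
    """Map each algorithm folder under <root>/<platform> to its dataset names.

    Dataset names are recovered by stripping the '<platform>_<alg>_' prefix,
    so underscores inside the dataset name are preserved.
    """
    runs = {}
    platform_dir = Path(root) / platform
    for alg_dir in sorted(p for p in platform_dir.iterdir() if p.is_dir()):
        prefix = f"{platform}_{alg_dir.name}_"
        runs[alg_dir.name] = sorted(
            d.name[len(prefix):]
            for d in alg_dir.iterdir()
            if d.is_dir() and d.name.startswith(prefix)
        )
    return runs
```

Because the dataset name is derived from the folder prefix instead of split on underscores, algorithm and dataset names may themselves contain underscores.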

Evaluation Results

We evaluate four SLAM systems, namely Underwater Visual Acoustic SLAM (UVA), SVIN2, ORB-SLAM3, and VINS-Fusion, on the proposed Tank dataset and report the results generated with the evaluation toolset.

Error Table

The RMSE of the absolute pose errors is presented in the following table. UVA achieves the lowest translation error on six of the eight sequences, which we attribute to the integration of the Doppler velocity log (DVL); SVIN2 is best on Structure Easy and WholeTank Hard. For rotation error, SVIN2 performs best on the Easy sequences and on both WholeTank sequences, while UVA performs best on the Medium and Hard sequences of Structure and HalfTank. ORB-SLAM3 performs well on Structure Easy but loses track in more challenging scenarios. VINS-Fusion also performs adequately on Structure Easy, but drifts rapidly on the other sequences.

| Sequence | UVA trans. (m) | SVIN2 trans. (m) | ORB3 trans. (m) | VINS trans. (m) | UVA rot. (deg) | SVIN2 rot. (deg) | ORB3 rot. (deg) | VINS rot. (deg) |
|---|---|---|---|---|---|---|---|---|
| Structure Easy | 0.178 | 0.090 | 0.199 | 0.219 | 4.109 | 1.756 | 4.183 | 2.321 |
| Structure Medium | 0.489 | 2.111 | 3.494 | 42359.413 | 10.982 | 57.510 | 90.014 | 65.957 |
| Structure Hard | 0.432 | 3.589 | 2.933 | 5165.797 | 5.923 | 23.439 | 102.615 | 38.244 |
| HalfTank Easy | 1.121 | 4.508 | 2.213 | 26.930 | 19.827 | 3.456 | 48.613 | 8.435 |
| HalfTank Medium | 0.256 | 2.902 | 0.708 | 15772.612 | 5.935 | 24.153 | 15.159 | 45.991 |
| HalfTank Hard | 0.367 | 68.703 | 1.147 | 31722.982 | 9.850 | 15.647 | 22.236 | 92.070 |
| WholeTank Medium | 0.267 | 0.406 | 0.682 | 4.106 | 9.202 | 6.578 | 8.195 | 91.497 |
| WholeTank Hard | 0.297 | 0.274 | 2.370 | 35999.266 | 10.697 | 3.796 | 30.504 | 28.585 |
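The per-sequence ranking behind these observations can be checked directly from the table. The snippet below copies the RMSE values from the error table and counts, for each metric, which method achieves the lowest error on each sequence. The names `TABLE`, `best_method`, and `win_counts` are illustrative only.

```python
from collections import Counter

METHODS = ("UVA", "SVIN2", "ORB3", "VINS")

# RMSE values from the error table: (translation in m, rotation in deg),
# each tuple listed in METHODS order.
TABLE = {
    "Structure Easy": ((0.178, 0.090, 0.199, 0.219), (4.109, 1.756, 4.183, 2.321)),
    "Structure Medium": ((0.489, 2.111, 3.494, 42359.413), (10.982, 57.510, 90.014, 65.957)),
    "Structure Hard": ((0.432, 3.589, 2.933, 5165.797), (5.923, 23.439, 102.615, 38.244)),
    "HalfTank Easy": ((1.121, 4.508, 2.213, 26.930), (19.827, 3.456, 48.613, 8.435)),
    "HalfTank Medium": ((0.256, 2.902, 0.708, 15772.612), (5.935, 24.153, 15.159, 45.991)),
    "HalfTank Hard": ((0.367, 68.703, 1.147, 31722.982), (9.850, 15.647, 22.236, 92.070)),
    "WholeTank Medium": ((0.267, 0.406, 0.682, 4.106), (9.202, 6.578, 8.195, 91.497)),
    "WholeTank Hard": ((0.297, 0.274, 2.370, 35999.266), (10.697, 3.796, 30.504, 28.585)),
}


def best_method(errors):
    """Return the name of the method with the lowest error."""
    best_index = min(range(len(errors)), key=lambda i: errors[i])
    return METHODS[best_index]


def win_counts(metric):
    """Count per-sequence wins; metric 0 = translation, 1 = rotation."""
    return Counter(best_method(row[metric]) for row in TABLE.values())
```

`win_counts(0)` gives UVA six translation wins and SVIN2 two. `win_counts(1)` splits the rotation wins evenly, four each, between UVA and SVIN2.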

Error Distribution Heat Map

The error distribution heat map in the following figure shows the spread of the errors over 10 runs of each method. UVA is the most robust method on the majority of the sequences.
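One way to summarize repeated runs before plotting a heat map is sketched below. For every method and sequence, it computes the mean and sample standard deviation of the RMSE over the runs. This is an assumption about how the spread could be summarized, not necessarily what the released heat-map script plots. `robustness_matrix` and its input layout are hypothetical.

```python
import numpy as np


def robustness_matrix(rmse_runs):
    """Summarize run-to-run spread as (methods x sequences) matrices.

    rmse_runs: dict mapping method name -> array-like of shape
    (n_sequences, n_runs) with the RMSE of each run (e.g. 10 runs per sequence).
    Returns (method_names, mean, std), where mean and std have shape
    (n_methods, n_sequences).
    """
    names = sorted(rmse_runs)
    stacked = np.stack([np.asarray(rmse_runs[m], dtype=float) for m in names])
    mean = stacked.mean(axis=2)
    std = stacked.std(axis=2, ddof=1)  # sample std over runs; needs >= 2 runs
    return names, mean, std
```

The `std` matrix can then be drawn with matplotlib's `imshow`, one row per method. A low value indicates that a method behaves consistently across runs of that sequence.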